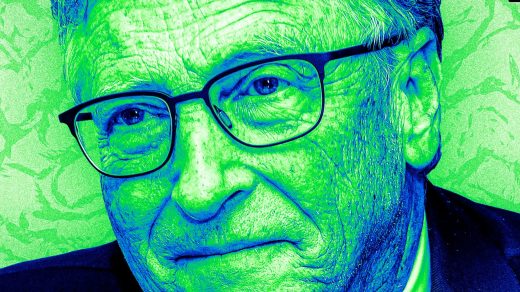

Why Bill Gates believes AI superintelligence will require some self-awareness

Current systems display ‘genius’ but need strategies for thinking through problems, the Microsoft cofounder says.

Welcome to AI Decoded, Fast Company’s weekly newsletter that breaks down the most important news in the world of AI. You can sign up to receive this newsletter every week here.

Bill Gates says “metacognition” is AI’s next frontier

Reporting on and writing about AI has given me a whole new appreciation of how flat-out amazing our human brains are. While large language models (LLMs) are impressive, they lack whole dimensions of thought that we humans take for granted. Bill Gates hit on this idea last week on the Next Big Idea Club podcast. Speaking to host Rufus Griscom, Gates talked at length about “metacognition,” which refers to a system that can think about its own thinking. Gates defined metacognition as the ability to “think about a problem in a broad sense and step back and say, Okay, how important is this to answer? How could I check my answer, and what external tools would help me with this?”

The Microsoft founder said the overall “cognitive strategy” of existing LLMs like GPT-4 or Llama was still lacking in sophistication. “It’s just generating through constant computation each token and sequence, and it’s mind-blowing that that works at all,” Gates said. “It does not step back like a human and think, Okay, I’m gonna write this paper and here’s what I want to cover; okay, I’ll put some text in here, and here’s what I want to do for the summary.”

Gates believes that AI researchers’ go-to method of making LLMs perform better—supersizing their training data and compute power—will only yield a couple more big leaps forward. After that, AI researchers will have to employ metacognition strategies to teach AI models to think smarter, not harder.

Metacognition research may be the key to fixing LLMs’ most vexing problem: their reliability and accuracy, Gates said. “This technology . . . will reach superhuman levels; we’re not there today, if you put in the reliability constraint,” he said. “A lot of the new work is adding a level of metacognition that, done properly, will solve the sort of erratic nature of the genius.”

How the Supreme Court’s landmark Chevron ruling will affect tech and AI

The implications of the Supreme Court’s Chevron decision Friday are becoming clearer this week, including what it means for the future of AI. In Loper Bright v. Raimondo, the court reversed the “Chevron Doctrine,” which required courts to respect federal agencies’ (reasonable) interpretations of statutes that don’t directly address the issue at the center of a dispute. In essence, SCOTUS decided that the judiciary is better equipped (and perhaps less politically motivated) than executive branch agencies to fill in the legal ambiguities of laws passed by Congress. There may be some truth to that, but the counterargument is that the agencies have years of subject matter and industry expertise, which enables them to interpret the intentions of Congress and settle disputes more effectively.

As Axios’s Scott Rosenberg points out, the removal of the Chevron Doctrine may make passing meaningful federal AI regulation much harder. Chevron allowed Congress to define regulations as sets of general directives, and left it to the experts at the agencies to define the specific rules and settle disputes on a case-by-case basis at the implementation and enforcement level. Now, it’ll be on Congress to hash out the fine points of the law in advance, doing its best to anticipate disputes that might arise in the future. And that might be especially difficult with a young and fast-moving industry like AI. In a post-Chevron world, if Congress passes AI regulation, it’ll be the courts that interpret the law from now on, likely at a point when the industry, technology, and players have radically changed.

But there’s no guarantee that the courts will rise to the challenge. Just look at the high court’s decision to effectively punt on the constitutionality of Texas and Florida regulations governing social networks’ content moderation. “Their unwillingness to resolve such disputes over social media—a well-established technology—is troubling given the rise of AI, which may present even thornier legal and Constitutional questions,” Mercatus Center AI researcher Dean Ball points out.

Figma’s new AI feature appears to have reproduced Apple designs

The design app maker Figma has temporarily disabled its newly launched “Make Design” feature after a user found that the tool generates weather app UX designs that look strikingly similar to those of Apple’s Weather app. Such close copying by generative AI models often suggests that the AI’s training data was thin in a particular area, causing it to rely too heavily on a single, recognizable piece of training data, in this case Apple’s designs.

But Figma CEO Dylan Field denies that his product was exposed to other app designs during its training. “As we have explained publicly, the feature uses off-the-shelf LLMs, combined with design systems we commissioned to be used by these models,” Field said on X. “The problem with this approach . . . is that variability is too low.”

Translation: The systems powering “Make Design” were insufficiently trained, but it wasn’t Figma’s fault.
